a0cf5566d88533c5caa7a490beb6eb0760eee9b4,torch/optim/sgd.py,SGD,step,#SGD#Any#,76

Before Change

            d_p = p.grad
            if weight_decay != 0:
                d_p = d_p.add(p, alpha=weight_decay)
            if momentum != 0:
                param_state = self.state[p]
                if "momentum_buffer" not in param_state:
                    buf = param_state["momentum_buffer"] = torch.clone(d_p).detach()
                else:
                    buf = param_state["momentum_buffer"]
                    buf.mul_(momentum).add_(d_p, alpha=1 - dampening)
                if nesterov:
                    d_p = d_p.add(buf, alpha=momentum)
                else:
                    d_p = buf
            p.add_(d_p, alpha=-group["lr"])
    return loss
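The per-parameter arithmetic in the "Before Change" snippet can be summarized as the update rule below. This is only a reading of that snippet. The symbols are introduced here for illustration and do not appear in the record: $g$ stands for `p.grad`, $\lambda$ for `weight_decay`, $\mu$ for `momentum`, $\tau$ for `dampening`, $\eta$ for `group["lr"]`, $b$ for the momentum buffer, and $d$ for `d_p`.

```latex
\begin{aligned}
d &= g + \lambda p
  && \text{(applied only if } \lambda \neq 0\text{)} \\
b &\leftarrow
  \begin{cases}
    d & \text{if no buffer exists yet} \\
    \mu b + (1 - \tau)\, d & \text{otherwise}
  \end{cases}
  && \text{(applied only if } \mu \neq 0\text{)} \\
d &\leftarrow
  \begin{cases}
    d + \mu b & \text{if nesterov} \\
    b & \text{otherwise}
  \end{cases}
  && \text{(applied only if } \mu \neq 0\text{)} \\
p &\leftarrow p - \eta\, d
\end{aligned}
```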

After Change

            loss = closure()
    for group in self.param_groups:
        params_with_grad = []
        d_p_list = []
        momentum_buffer_list = []
        weight_decay = group["weight_decay"]
        momentum = group["momentum"]
        dampening = group["dampening"]
        nesterov = group["nesterov"]
        lr = group["lr"]
        for p in group["params"]:
            if p.grad is not None:
                params_with_grad.append(p)
                d_p_list.append(p.grad)
                state = self.state[p]
                if "momentum_buffer" not in state:
                    momentum_buffer_list.append(None)
                else:
                    momentum_buffer_list.append(state["momentum_buffer"])
        F.sgd(params_with_grad,
              d_p_list,
              momentum_buffer_list,
              weight_decay,
              momentum,
              lr,
              dampening,
              nesterov)
        # update momentum_buffers in state
        for p, momentum_buffer in zip(params_with_grad, momentum_buffer_list):
            state = self.state[p]
            state["momentum_buffer"] = momentum_buffer
    return loss
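The "After Change" snippet moves the per-parameter arithmetic into a single call that receives parallel lists, then writes the buffers back into `self.state`. The record does not show the body of `F.sgd`, so the following is only a minimal sketch of what such a functional update might look like. It assumes the function applies the same arithmetic as the "Before Change" loop and fills `None` buffer slots in place. The name `sgd_sketch` is hypothetical, and plain floats stand in for tensors so the example runs without PyTorch.

```python
from typing import List, Optional


def sgd_sketch(
    params: List[float],
    d_p_list: List[float],
    momentum_buffer_list: List[Optional[float]],
    weight_decay: float,
    momentum: float,
    lr: float,
    dampening: float,
    nesterov: bool,
) -> None:
    """Hypothetical scalar stand-in for a functional SGD step.

    Mutates `params` and `momentum_buffer_list` in place, so the caller
    can copy the buffers back into its own state afterwards.
    """
    for i, param in enumerate(params):
        d_p = d_p_list[i]
        if weight_decay != 0:
            d_p = d_p + weight_decay * param
        if momentum != 0:
            buf = momentum_buffer_list[i]
            if buf is None:
                # First step: the buffer starts as a copy of the gradient.
                buf = d_p
            else:
                buf = momentum * buf + (1 - dampening) * d_p
            momentum_buffer_list[i] = buf
            d_p = d_p + momentum * buf if nesterov else buf
        params[i] = param - lr * d_p


# Usage: two scalar "parameters", no buffers yet (first step).
params = [1.0, 2.0]
grads = [0.5, -1.0]
buffers: List[Optional[float]] = [None, None]
sgd_sketch(params, grads, buffers, weight_decay=0.0, momentum=0.9,
           lr=0.1, dampening=0.0, nesterov=False)
```

Because the `None` entries are replaced inside the call, the caller has to store the list entries back afterwards, which is the role of the final `zip` loop in the snippet above.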

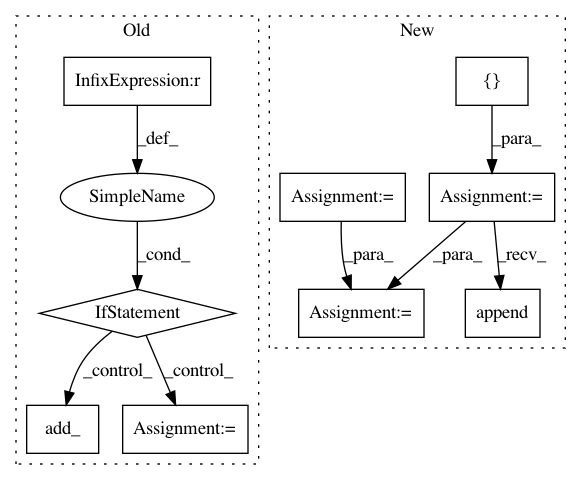

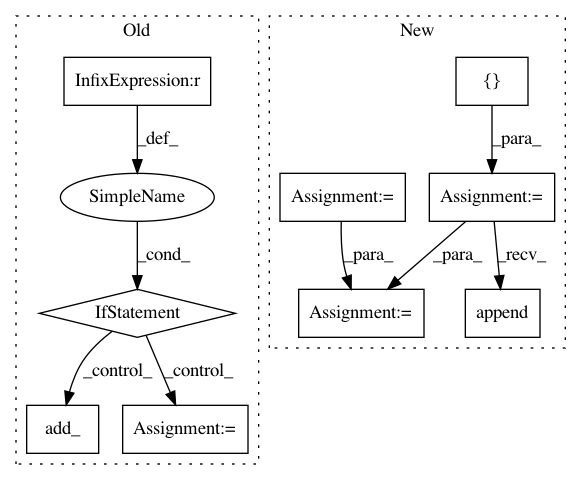

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 9

Instances

Project Name: pytorch/pytorch

Commit Name: a0cf5566d88533c5caa7a490beb6eb0760eee9b4

Time: 2021-01-21

Author: wanchaol@users.noreply.github.com

File Name: torch/optim/sgd.py

Class Name: SGD

Method Name: step

Project Name: pytorch/fairseq

Commit Name: f8377a704cda050dec1376d6bea9a8888ed57fbf

Time: 2018-09-30

Author: myleott@fb.com

File Name: fairseq/sequence_generator.py

Class Name: SequenceGenerator

Method Name: _decode