dcef47168e1d630fe02634f6a542df0c3571228d,tensorlayer/layers.py,BiDynamicRNNLayer,__init__,#BiDynamicRNNLayer#Any#Any#Any#Any#Any#Any#Any#Any#Any#Any#Any#Any#Any#Any#,4132

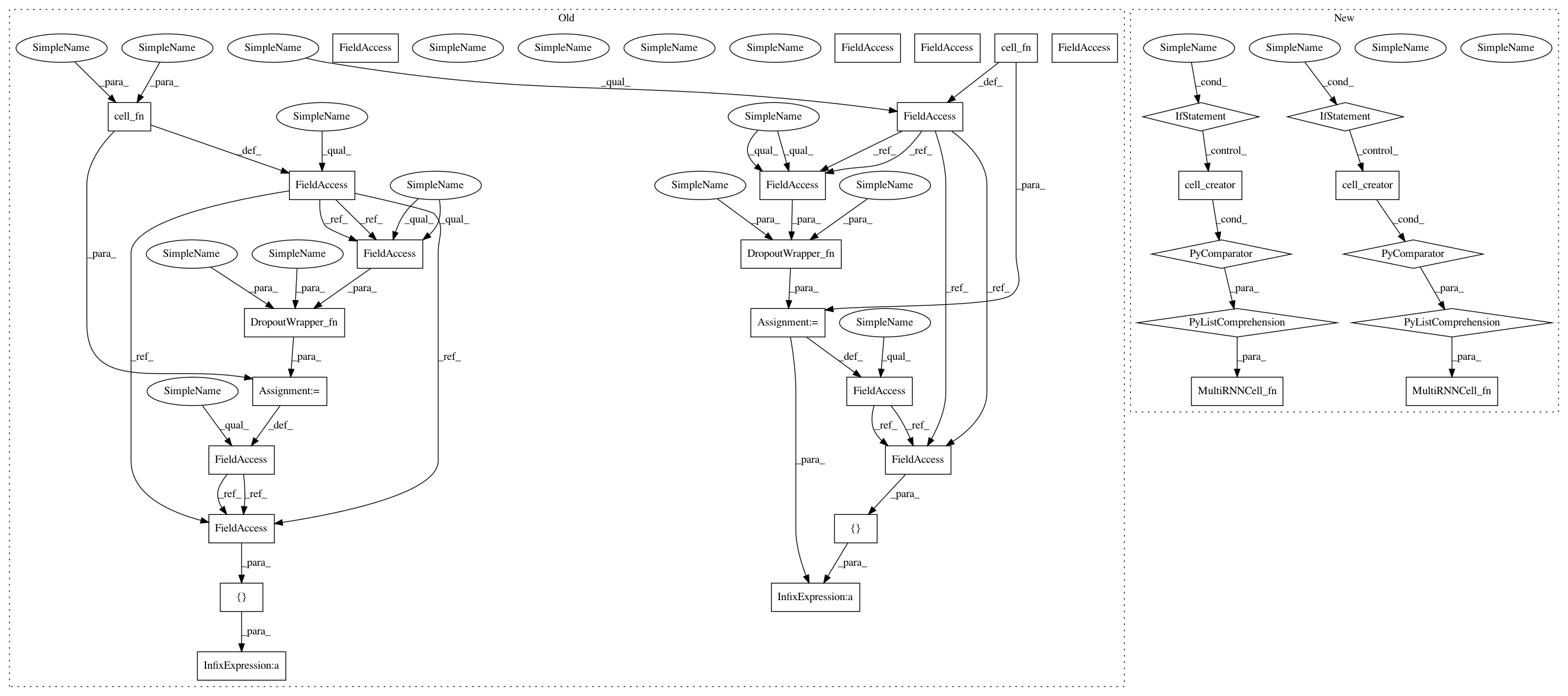

Before Change

    with tf.variable_scope(name, initializer=initializer) as vs:
        # Creates the cell function
        # cell_instance_fn=lambda: cell_fn(num_units=n_hidden, **cell_init_args)  # HanSheng
        self.fw_cell = cell_fn(num_units=n_hidden, **cell_init_args)
        self.bw_cell = cell_fn(num_units=n_hidden, **cell_init_args)
        # Apply dropout
        if dropout:
            if type(dropout) in [tuple, list]:
                in_keep_prob = dropout[0]
                out_keep_prob = dropout[1]
            elif isinstance(dropout, float):
                in_keep_prob, out_keep_prob = dropout, dropout
            else:
                raise Exception("Invalid dropout type (must be a 2-D tuple of "
                                "float)")
            try:
                DropoutWrapper_fn = tf.contrib.rnn.DropoutWrapper
            except:
                DropoutWrapper_fn = tf.nn.rnn_cell.DropoutWrapper
            # cell_instance_fn1=cell_instance_fn  # HanSheng
            # cell_instance_fn=lambda: DropoutWrapper_fn(
            #     cell_instance_fn1(),
            #     input_keep_prob=in_keep_prob,
            #     output_keep_prob=out_keep_prob)
            self.fw_cell = DropoutWrapper_fn(
                self.fw_cell,
                input_keep_prob=in_keep_prob,
                output_keep_prob=out_keep_prob)
            self.bw_cell = DropoutWrapper_fn(
                self.bw_cell,
                input_keep_prob=in_keep_prob,
                output_keep_prob=out_keep_prob)
        # Apply multiple layers
        if n_layer > 1:
            try:
                MultiRNNCell_fn = tf.contrib.rnn.MultiRNNCell
            except:
                MultiRNNCell_fn = tf.nn.rnn_cell.MultiRNNCell
            # cell_instance_fn2=cell_instance_fn  # HanSheng
            # cell_instance_fn=lambda: MultiRNNCell_fn([cell_instance_fn2() for _ in range(n_layer)])
            self.fw_cell = MultiRNNCell_fn([self.fw_cell] * n_layer)
            self.bw_cell = MultiRNNCell_fn([self.bw_cell] * n_layer)
            # self.fw_cell=cell_instance_fn()
            # self.bw_cell=cell_instance_fn()
        # Initial state of RNN
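In the before-change code, each stack is built with `[self.fw_cell] * n_layer`. Python's sequence repetition copies references, not objects, so all `n_layer` entries point at one and the same cell instance. The sketch below shows this with a hypothetical `DummyCell` stand-in rather than a real TensorFlow cell; it illustrates only the object aliasing, not TensorFlow's variable handling.

```python
class DummyCell:
    """Hypothetical stand-in for an RNN cell; it only carries its size."""

    def __init__(self, num_units):
        self.num_units = num_units


n_layer = 3
cell = DummyCell(num_units=128)

# Before-change pattern: list repetition repeats the *same* reference.
layers = [cell] * n_layer

# Every "layer" is literally the same object.
print(all(layer is cell for layer in layers))  # True
print(len({id(layer) for layer in layers}))    # 1
```

With a real cell, this aliasing asks the multi-layer wrapper to use one cell object in several layer scopes. TensorFlow 1.1 and later reject that with a variable-reuse error, which appears to be what this change works around.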

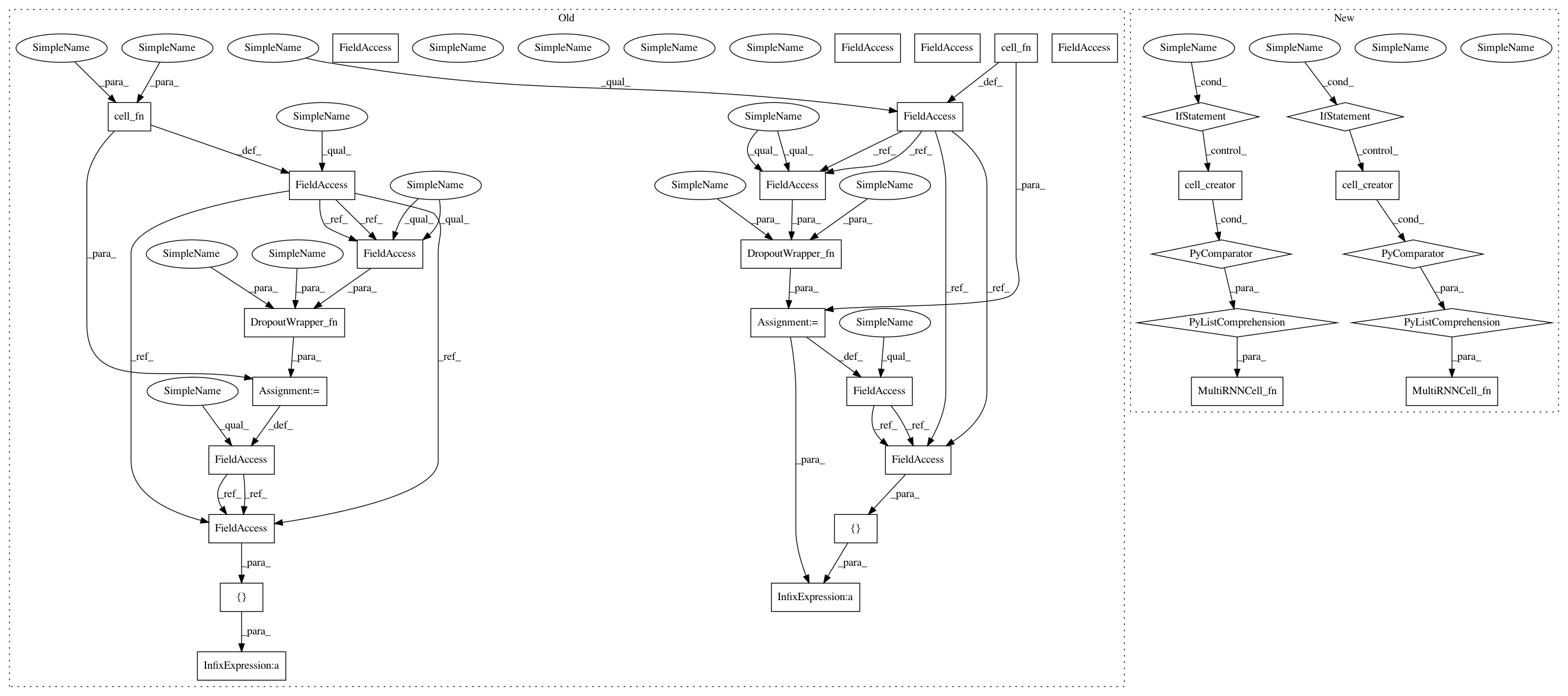

After Change

            # cell_instance_fn2=cell_instance_fn  # HanSheng
            # cell_instance_fn=lambda: MultiRNNCell_fn([cell_instance_fn2() for _ in range(n_layer)])
            self.fw_cell = MultiRNNCell_fn([cell_creator() for _ in range(n_layer)])
            self.bw_cell = MultiRNNCell_fn([cell_creator() for _ in range(n_layer)])
            # self.fw_cell=cell_instance_fn()
            # self.bw_cell=cell_instance_fn()
        # Initial state of RNN
        if fw_initial_state is None:
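The after-change code calls `cell_creator()` once per layer inside a list comprehension, so every layer of both the forward and backward stacks gets its own cell object. The excerpt does not show how `cell_creator` is defined. The sketch below assumes it is a zero-argument factory that builds a fresh base cell and, when dropout is enabled, wraps it using the same tuple-or-float `dropout` handling as the before-change code. `DummyCell`, `DummyDropoutWrapper`, and `make_cell_creator` are hypothetical stand-ins, not TensorLayer or TensorFlow APIs.

```python
class DummyCell:
    """Hypothetical stand-in for an RNN cell."""

    def __init__(self, num_units):
        self.num_units = num_units


class DummyDropoutWrapper:
    """Hypothetical stand-in for a dropout wrapper around a cell."""

    def __init__(self, cell, input_keep_prob=1.0, output_keep_prob=1.0):
        self.cell = cell
        self.input_keep_prob = input_keep_prob
        self.output_keep_prob = output_keep_prob


def make_cell_creator(n_hidden, dropout=None):
    """Return a zero-argument factory that builds a fresh (optionally wrapped) cell."""
    if dropout:
        # Mirrors the layer's normalization: a 2-item tuple/list or a single float.
        if isinstance(dropout, (tuple, list)):
            in_keep_prob, out_keep_prob = dropout[0], dropout[1]
        elif isinstance(dropout, float):
            in_keep_prob = out_keep_prob = dropout
        else:
            # The original raises a bare Exception; TypeError is used here instead.
            raise TypeError("Invalid dropout type (must be a 2-D tuple of float)")
        return lambda: DummyDropoutWrapper(
            DummyCell(n_hidden),
            input_keep_prob=in_keep_prob,
            output_keep_prob=out_keep_prob,
        )
    return lambda: DummyCell(n_hidden)


n_layer = 3
cell_creator = make_cell_creator(n_hidden=128, dropout=(0.8, 0.9))

# After-change pattern: one factory call per layer, so no cell object is shared.
fw_layers = [cell_creator() for _ in range(n_layer)]
bw_layers = [cell_creator() for _ in range(n_layer)]

print(len({id(layer) for layer in fw_layers + bw_layers}))  # 6
```

Building cells through a factory keeps the per-layer configuration in one place while guaranteeing that each layer receives a distinct object.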

In pattern: SUPERPATTERN

Frequency: 4

Non-data size: 32

Instances

Project Name: tensorlayer/tensorlayer

Commit Name: dcef47168e1d630fe02634f6a542df0c3571228d

Time: 2017-05-15

Author: chentao904@163.com

File Name: tensorlayer/layers.py

Class Name: BiDynamicRNNLayer

Method Name: __init__

Project Name: tensorlayer/tensorlayer

Commit Name: dcef47168e1d630fe02634f6a542df0c3571228d

Time: 2017-05-15

Author: chentao904@163.com

File Name: tensorlayer/layers.py

Class Name: BiDynamicRNNLayer

Method Name: __init__

Project Name: tensorlayer/tensorlayer

Commit Name: dcef47168e1d630fe02634f6a542df0c3571228d

Time: 2017-05-15

Author: chentao904@163.com

File Name: tensorlayer/layers.py

Class Name: BiRNNLayer

Method Name: __init__

Project Name: tensorlayer/srgan

Commit Name: edc5cf0c0e22b02d52de1b2e3ab89daceaa70c97

Time: 2017-06-06

Author: dhsig552@163.com

File Name: tensorlayer/layers.py

Class Name: BiRNNLayer

Method Name: __init__

Project Name: tensorlayer/srgan

Commit Name: edc5cf0c0e22b02d52de1b2e3ab89daceaa70c97

Time: 2017-06-06

Author: dhsig552@163.com

File Name: tensorlayer/layers.py

Class Name: BiDynamicRNNLayer

Method Name: __init__