c8f075268f7e5645a77eef21591a62a07e7e8baa,hypergan/trainers/sgd_trainer.py,,create,#Any#Any#Any#Any#,27

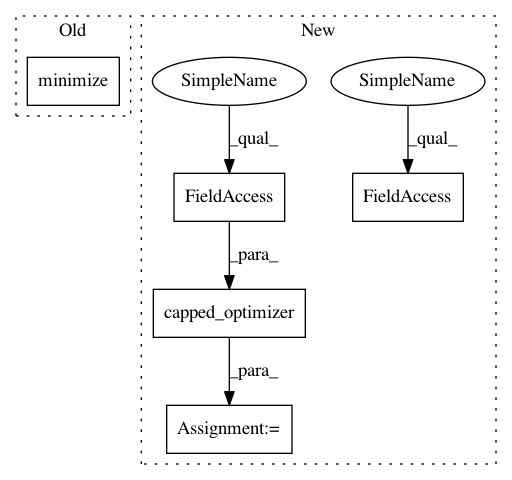

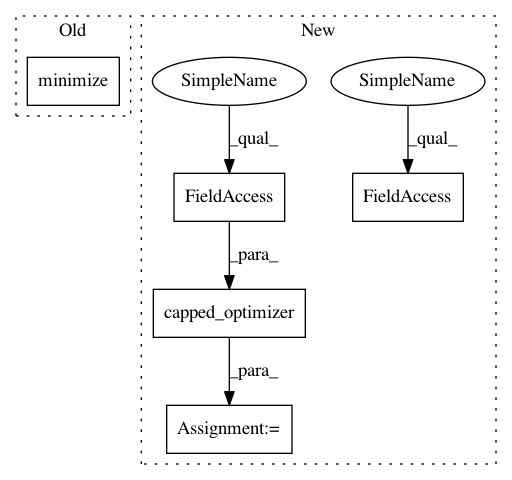

Before Change

d_lr = np.float32(config.discriminator_learn_rate)

gan.graph.d_vars = d_vars

g_optimizer = tf.train.GradientDescentOptimizer(g_lr).minimize(g_loss, var_list=g_vars)

d_optimizer = tf.train.GradientDescentOptimizer(d_lr).minimize(d_loss, var_list=d_vars)

return g_optimizer, d_optimizer

After Change

d_optimizer = tf.train.GradientDescentOptimizer(d_lr)

if(config.clipped_gradients):

    g_optimizer = capped_optimizer(g_optimizer, config.clipped_gradients, g_loss, g_vars)

    d_optimizer = capped_optimizer(d_optimizer, config.clipped_gradients, d_loss, d_vars)

else:

    g_optimizer = g_optimizer.minimize(g_loss, var_list=g_vars)

    d_optimizer = d_optimizer.minimize(d_loss, var_list=d_vars)

gan.graph.clip = [tf.assign(d,tf.clip_by_value(d, -config.d_clipped_weights, config.d_clipped_weights)) for d in d_vars]

return g_optimizer, d_optimizer
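
The body of `capped_optimizer` is not part of this excerpt. The sketch below is only an illustration of the arithmetic, under two assumptions: that `capped_optimizer` clamps each gradient element to `[-cap, cap]` before the SGD update (not confirmed by the diff), and that `gan.graph.clip` clamps discriminator weights element-wise, as `tf.clip_by_value` does. It uses plain Python lists rather than TensorFlow, and the names `clip_by_value`, `capped_sgd_step`, and `clip_weights` are hypothetical helpers, not HyperGAN functions.

```python
from typing import List


def clip_by_value(values: List[float], low: float, high: float) -> List[float]:
    """Clamp every element into [low, high], mirroring tf.clip_by_value."""
    return [min(max(v, low), high) for v in values]


def capped_sgd_step(weights: List[float], grads: List[float],
                    learn_rate: float, cap: float) -> List[float]:
    """One SGD step where each gradient element is first clamped to [-cap, cap].

    Assumption: this is what capped_optimizer does; the excerpt does not show it.
    """
    capped = clip_by_value(grads, -cap, cap)
    return [w - learn_rate * g for w, g in zip(weights, capped)]


def clip_weights(weights: List[float], limit: float) -> List[float]:
    """Clamp weights to [-limit, limit], like the gan.graph.clip assignments."""
    return clip_by_value(weights, -limit, limit)


if __name__ == "__main__":
    weights = [0.5, -0.2, 0.9]
    grads = [10.0, -0.5, -30.0]   # two of these exceed the cap of 1.0

    stepped = capped_sgd_step(weights, grads, learn_rate=0.1, cap=1.0)
    clipped = clip_weights(stepped, limit=0.5)

    print("after capped step:", stepped)
    print("after weight clip:", clipped)
```

With gradient capping, a single large gradient can move a weight by at most `learn_rate * cap` per step. The weight clip afterwards keeps every discriminator variable inside a fixed range regardless of how many steps have been taken.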

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 5

Instances

Project Name: HyperGAN/HyperGAN

Commit Name: c8f075268f7e5645a77eef21591a62a07e7e8baa

Time: 2017-02-28

Author: mikkel@255bits.com

File Name: hypergan/trainers/sgd_trainer.py

Class Name:

Method Name: create

Project Name: HyperGAN/HyperGAN

Commit Name: 74b3ecda9746966dcfb10fce64be9d5dda58a993

Time: 2017-02-18

Author: mikkel@255bits.com

File Name: hypergan/trainers/rmsprop_trainer.py

Class Name:

Method Name: create

Project Name: HyperGAN/HyperGAN

Commit Name: 74b3ecda9746966dcfb10fce64be9d5dda58a993

Time: 2017-02-18

Author: mikkel@255bits.com

File Name: hypergan/trainers/adam_trainer.py

Class Name:

Method Name: create