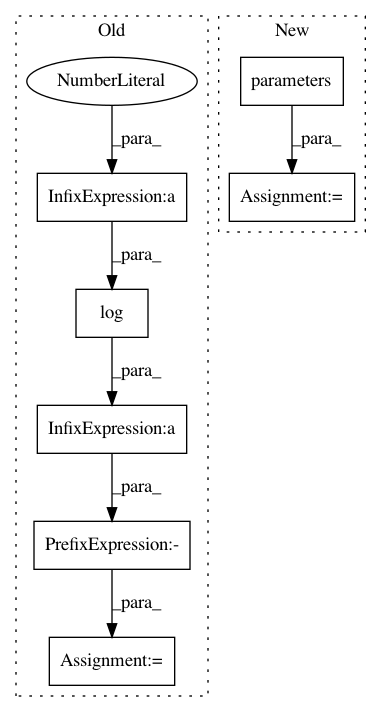

Before Change

surr_obj_v = adv_v * torch.exp(logprob_pi_v - logprob_old_pi_v)

clipped_surr_v = torch.clamp(surr_obj_v, 1.0 - PPO_EPS, 1.0 + PPO_EPS)

loss_policy_v = -torch.min(surr_obj_v, clipped_surr_v).mean()

entropy_loss_v = ENTROPY_BETA * (-(torch.log(2*math.pi*var_v) + 1)/2).mean()

loss_v = loss_policy_v + entropy_loss_v + loss_value_v

loss_v.backward()

optimizer.step()

tb_tracker.track("advantage", adv_v, step_idx)
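
For reference, the policy-loss lines above pass `surr_obj_v` (the advantage multiplied by the probability ratio) to `torch.clamp`. The PPO clipped objective as it is usually stated clamps the ratio itself and only then multiplies by the advantage. The following is a minimal sketch of that usual form, not the repository's code: it uses plain Python floats so it runs without PyTorch, the function names are hypothetical, and the value of `PPO_EPS` is an assumption because the excerpt does not show it. A tensor version would use `torch.clamp` and `torch.min` in the same positions. The last function restates the Gaussian entropy expression from the `entropy_loss_v` line.

```python
import math

PPO_EPS = 0.2  # assumed value; the excerpt does not show this constant


def clipped_surrogate(adv, logprob_new, logprob_old, eps=PPO_EPS):
    """Per-sample clipped surrogate: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    # Probability ratio r = pi_new(a|s) / pi_old(a|s), computed from log-probabilities.
    ratio = math.exp(logprob_new - logprob_old)
    # Clamp the ratio (not the advantage-weighted product) to [1 - eps, 1 + eps].
    clipped_ratio = max(1.0 - eps, min(ratio, 1.0 + eps))
    return min(ratio * adv, clipped_ratio * adv)


def policy_loss(advs, logprobs_new, logprobs_old, eps=PPO_EPS):
    """Negated batch mean of the clipped surrogate, i.e. a loss to minimize."""
    terms = [
        clipped_surrogate(a, n, o, eps)
        for a, n, o in zip(advs, logprobs_new, logprobs_old)
    ]
    return -sum(terms) / len(terms)


def gaussian_entropy(var):
    """Differential entropy of a 1-D Gaussian with variance var."""
    # Same expression as in the entropy_loss_v line: (ln(2 * pi * var) + 1) / 2.
    return (math.log(2.0 * math.pi * var) + 1.0) / 2.0
```

With a positive advantage the clamp caps how much a large ratio can increase the objective; with a negative advantage the `min` keeps the unclipped, more pessimistic term.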

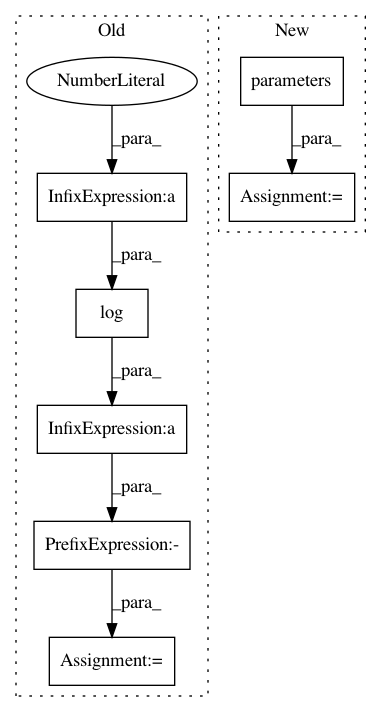

After Change

exp_source = ptan.experience.ExperienceSourceFirstLast(envs, agent, GAMMA, steps_count=REWARD_STEPS)

opt_act = optim.Adam(net_act.parameters(), lr=LEARNING_RATE_ACTOR)

opt_crt = optim.Adam(net_crt.parameters(), lr=LEARNING_RATE_CRITIC)

batch = []

best_reward = None

with ptan.common.utils.RewardTracker(writer) as tracker:

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 7

Instances

Project Name: PacktPublishing/Deep-Reinforcement-Learning-Hands-On

Commit Name: 99abcc6e9b57f441999ce10dbc31ca1bed79c356

Time: 2018-02-10

Author: max.lapan@gmail.com

File Name: ch15/04_train_ppo.py

Class Name:

Method Name:

Project Name: leftthomas/SRGAN

Commit Name: 675d1e61ca2cac2e6d09781e1c8406466bc32867

Time: 2017-12-02

Author: leftthomas@qq.com

File Name: train.py

Class Name:

Method Name:

Project Name: PacktPublishing/Deep-Reinforcement-Learning-Hands-On

Commit Name: 4296a765125fff6491892a1bb70fb32ac516dae6

Time: 2018-02-10

Author: max.lapan@gmail.com

File Name: ch15/01_train_a2c.py

Class Name:

Method Name: